About Me

I am currently a PhD student in the VRLAB of BUAA, supervised by Prof. Xukun Shen. I received my BS degree in mathematics from the College of Mathematics & Econometrics, Hunan University, in 2007. Afterwards, while pursuing a master's degree at BUAA, I spent two years working with the Internet Graphics Group of MSRA and the Computer Science Department of UIUC on a research project on deformation in computer graphics. My research interests now include virtual reality, computer animation and deformation, computational geometry, and GPU computing.

Projects and Publications

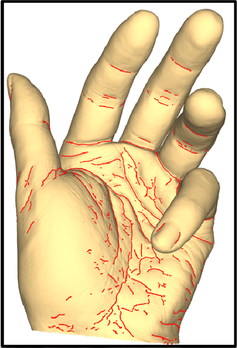

Detail-Preserving Controllable Deformation from Sparse Examples,

Haoda Huang, KangKang Yin, Ling Zhao, Yue Qi, Yizhou Yu, Xin Tong. IEEE Transactions on Visualization and Computer Graphics (TVCG), Volume 18, Issue 8, August 2012, pages 1215-1227.

Abstract: Recent advances in laser scanning technology have made it possible to faithfully scan a real object with tiny geometric details, such as pores and wrinkles. However, a faithful digital model should not only capture static details of the real counterpart but also be able to reproduce the deformed versions of such details. In this paper, we develop a data-driven model that has two components; the first accommodates smooth large-scale deformations and the second captures high-resolution details. Large-scale deformations are based on a nonlinear mapping between sparse control points and bone transformations. A global mapping, however, would fail to synthesize realistic geometries from sparse examples, for highly-deformable models with a large range of motion. The key is to train a collection of mappings defined over regions locally in both the geometry and the pose space. Deformable fine-scale details are generated from a second nonlinear mapping between the control points and per-vertex displacements. We apply our modeling scheme to scanned human hand models, scanned face models, face models reconstructed from multiview video sequences, and manually constructed dinosaur models. Experiments show that our deformation models, learned from extremely sparse training data, are effective and robust in synthesizing highly-deformable models with rich fine features, for keyframe animation as well as performance-driven animation.

[pdf page]

Robust Wrinkle-Aware Non-Rigid Registration for Triangle Meshes of Hand with Rich and Dynamic Details,

Ling Zhao, Xukun Shen, Xiang Long. Shape Modeling International 2012, Computers & Graphics, Volume 36, Issue 5.

Abstract: Consistency of topology for 3D models with such rich details as pores and wrinkles is very important for performing higher-level tasks like deformation and animation. In this paper, we propose a novel wrinkle-aware registration method, which aligns not only the large-scale poses, but also high-resolution deformable details, in a unified framework. Using large-scale alignment enables us to solve a template-based energy minimization problem through pure Euclidean measurement. Fine-scale features are aligned by first computing crest lines for both the intermediately registered mesh and the target, and then evaluating the shape descriptors at the feature points, followed by a graph matching procedure to achieve a global optimization of the closest point matching. Experiments show that our method is effective and robust in preserving the quality of the source, while matching the fine-scale details of the target.

[pdf: size: 3M]

Controllable Hand Deformation from Sparse Examples with Rich Details,

Haoda Huang, Ling Zhao, KangKang Yin, Yue Qi, Yizhou Yu, Xin Tong. ACM/Eurographics Symposium on Computer Animation 2011. Best Paper Award of SCA2011.

Abstract: Recent advances in laser scanning technology have made it possible to faithfully scan a real object with tiny geometric details, such as pores and wrinkles. However, a faithful digital model should not only capture static details of the real counterpart but also be able to reproduce the deformed versions of such details. In this paper, we develop a data-driven model that has two components respectively accommodating smooth large-scale deformations and high-resolution deformable details. Large-scale deformations are based on a nonlinear mapping between sparse control points and bone transformations. A global mapping, however, would fail to synthesize realistic geometries from sparse examples, for highly-deformable models with a large range of motion. The key is to train a collection of mappings defined over regions locally in both the geometry and the pose space. Deformable fine-scale details are generated from a second nonlinear mapping between the control points and per-vertex displacements. We apply our modeling scheme to scanned human hand models. Experiments show that our deformation models, learned from extremely sparse training data, are effective and robust in synthesizing highly-deformable models with rich fine features, for keyframe animation as well as performance-driven animation. We also compare our results with those obtained by alternative techniques.

[pdf: size: 9M] [video: size: 47M]
